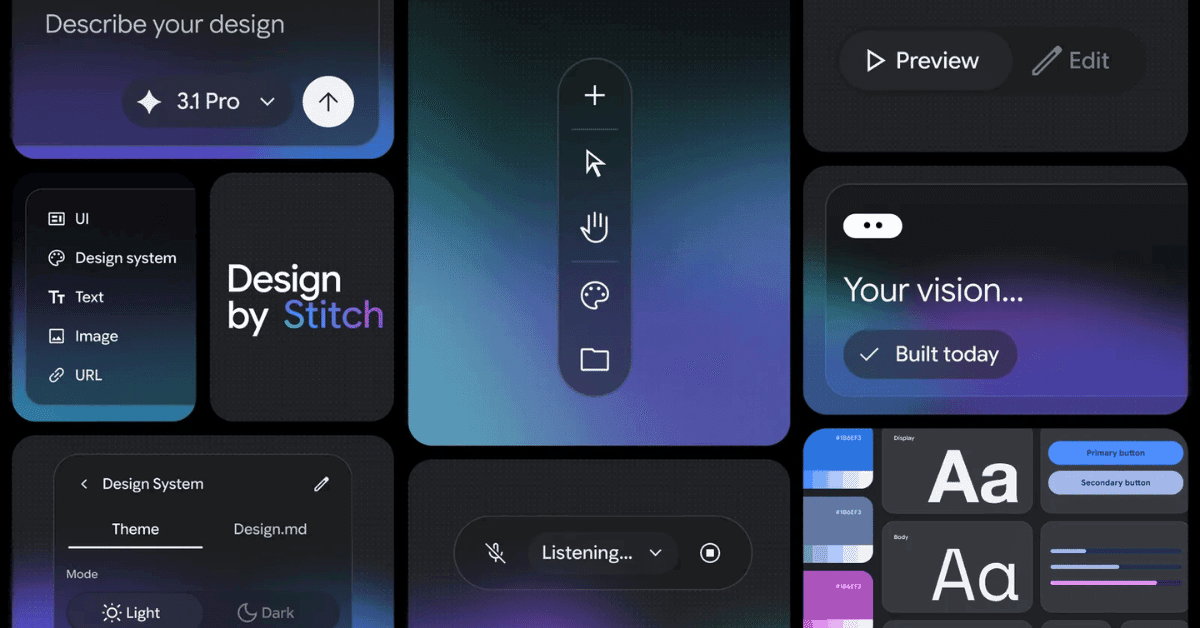

San Francisco: Google Labs has relaunched Stitch as a fully AI-native design canvas. Anyone can now describe a product idea, a business objective, or even a mood, and Stitch will generate high-fidelity UI designs from it. No wireframes, no design tools, no prior experience required.

Vibe coding gave non-developers the ability to build software by describing what they wanted. Google is now applying the same logic to design.

The redesigned canvas is infinite and context-aware. Users can bring in images, text, or code as starting points. A new design agent reasons across the entire project history, not just the current screen. An Agent Manager also lets designers work on multiple ideas in parallel without losing track of progress.

Three features stand out.

- DESIGN.md: an agent-friendly Markdown file that lets users extract a design system from any URL, apply it across projects, or export it to other tools.

- Instant interactive prototyping: static designs become clickable flows in seconds, with Stitch automatically generating the logical next screens based on user interactions.

- Voice interaction: users can now speak directly to the canvas, asking for real-time critiques, color palette variations, or entirely new screens mid-conversation.

Stitch also connects to developer workflows via an MCP (Model Context Protocol) server and an SDK, with export paths into Google AI Studio and Antigravity. Its Skills library has already accumulated 2,400 GitHub stars.

The broader context matters. This week, Google also launched a full-stack vibe coding experience in Google AI Studio, signaling that it is building an end-to-end AI-native development pipeline that runs from idea to design to working code, entirely within its own ecosystem.